ChatGPT Transforming Fraud Management

By Sanjay Bhakta with Centific (formerly Pactera EDGE)

What is ChatGPT?

ChatGPT, a conversational AI model by OpenAI, launched on November 30, 2022, and rapidly gained prominence, with a million users signed up in the first week of availability. Lauded for performing “human-like” dialogue, answering questions, and generating emails, and with its creator reportedly valued at an estimated $29 B, it is taking fraud management by storm (Reimann, N., 2023). Automated responses synthesized by AI may be beneficial for operational activities such as fraud investigation, dispute management, chargebacks, and a suite of other capabilities, thereby unlocking organizational efficiencies, optimizing OPEX, and freeing up our employees.

Digital Safety

The conversational AI model, ChatGPT, is a fine-tuned model in the GPT-3.5 series that was trained using reinforcement learning from human feedback (RLHF) with Proximal Policy Optimization (PPO). This enabled the AI trainers to enrich the quality of dialogue, producing a more human-centric conversation in the relevant context. ChatGPT has limitations, such as generating nonsensical text, verbosity, responding to harmful instructions, and biased behavior, which can result in false positives and false negatives (OpenAI, 2022). Despite these limitations, the prospect of production deployment is appealing: it promises broader automation and operational savings, with ChatGPT infused throughout the workplace across industries such as consumer electronics, e-commerce, financial services, healthcare, high tech, retail, supply chain, travel and hospitality, and others. Caveat emptor: do industries and society comprehend the implications for digital safety that ChatGPT raises?

The intent of the AI Bill of Rights is to protect the civil rights of, and ensure fairness for, industries and consumers against potential harm from the use of AI algorithms; however, it stops short of regulating AI. The harms it addresses include bias, discrimination, and breach of privacy, and its protections are expressed as five principles guiding the design of an automated system (The White House, n.d.). Besides being devoid of regulation, the Bill does not include standards, best practices, or tools, though it does include an assessment to analyze algorithmic impact (Metz, R., 2022). Similarly, many other assessment guides are available, such as those from the World Economic Forum (WEForum, 2019) and the AI Now Institute (AINow, 2018). Across these guides, there does not appear to be an automated analysis that quantifies how closely a system adheres to the guiding principles, or that recommends remediation where it falls short. How may the guides apply to ChatGPT? Is there a role for ChatGPT in contact centers or back-office operations? Could we introduce risk, bias, or discrimination, or can we mitigate these threats while incorporating ChatGPT?

Disrupting Operations

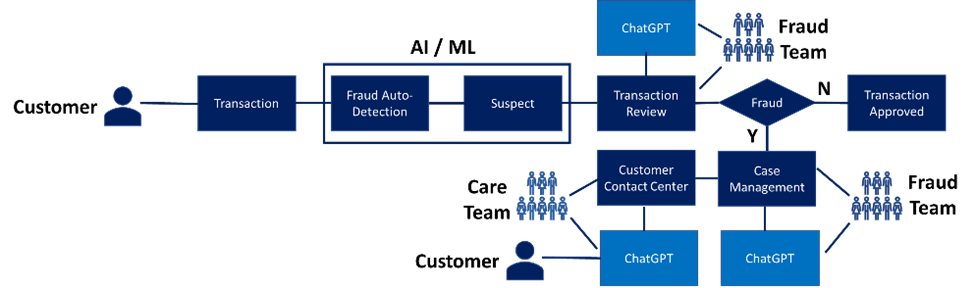

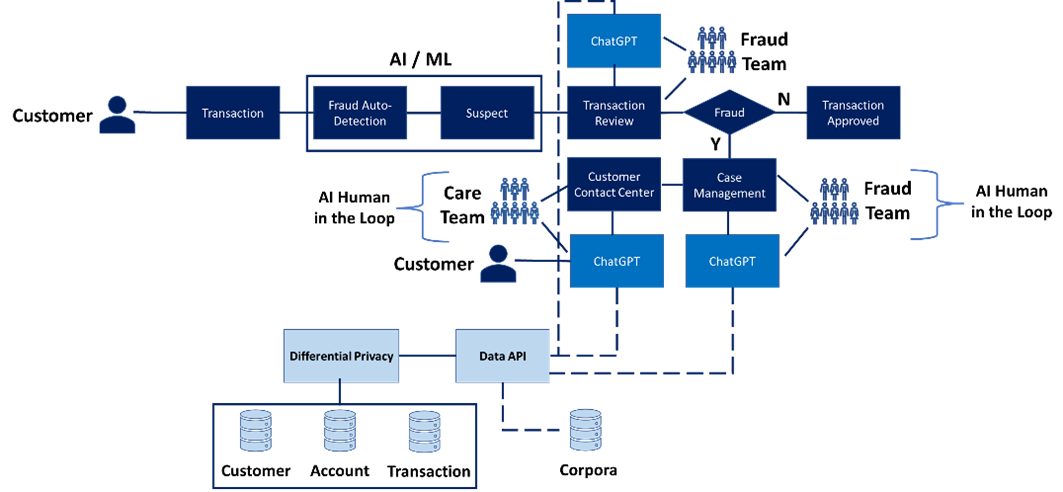

ChatGPT may hold promise in customer contact centers or fraud operations, automating tasks during customer inquiry or fraud investigation, as shown in Figure 1.0.

(Figure 1.0)

The automation may improve productivity, utilization, and overall OPEX. For the Customer Care Team or Fraud Team, tasks related to searches and event correlation may be automated through API integration with various repositories. However, PII pertaining to customer accounts and transaction history may conflict with data privacy requirements if sent to ChatGPT. Presuming a ChatGPT integration complies with data privacy requirements such as GDPR and PCI DSS, with PII protected by adopting NIST or other standards, it is expected that personal information is not transmitted to or integrated with ChatGPT. Alternatively, ChatGPT may be implemented within a given organization, where API integration is not external to the enterprise; such an implementation may still use PII in compliance with data privacy requirements, provided it is encrypted and secure. Prior to elaborating on a ChatGPT implementation, it's imperative to visualize the inputs and outputs of a conversation with ChatGPT.
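The expectation that personal information is not transmitted to an external ChatGPT service can be illustrated with a minimal sketch. It assumes a simple placeholder-substitution approach; the regular expressions, the `redact_pii` and `restore_pii` helpers, and the sample message are hypothetical and are not taken from any specific product or standard.

```python
import re

# Hypothetical patterns for illustration only; a production deployment would
# rely on a vetted PII-detection capability rather than a few regexes.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact_pii(text):
    """Swap each PII match for a numbered placeholder.

    Returns the redacted text and a placeholder-to-value mapping that
    stays inside the enterprise boundary.
    """
    mapping = {}

    def make_replacer(label):
        def replace(match):
            token = f"[{label}_{len(mapping) + 1}]"
            mapping[token] = match.group(0)
            return token
        return replace

    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(make_replacer(label), text)
    return text, mapping


def restore_pii(text, mapping):
    """Put the original values back into a response that echoes placeholders."""
    for token, value in mapping.items():
        text = text.replace(token, value)
    return text


message = "Customer jane.doe@example.com disputes a charge on card 4111 1111 1111 1111."
redacted, mapping = redact_pii(message)
print(redacted)  # Customer [EMAIL_1] disputes a charge on card [CARD_2].
```

Only the redacted text would leave the enterprise in this sketch; the mapping is retained internally so that a generated response can be re-personalized after it returns.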

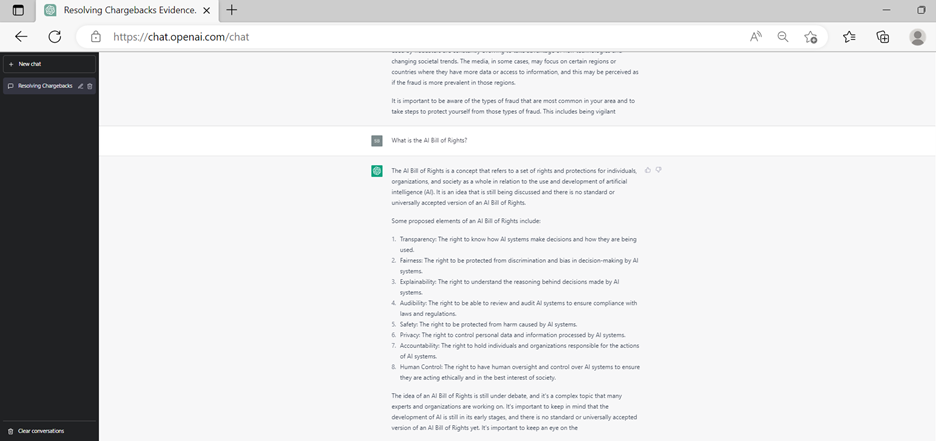

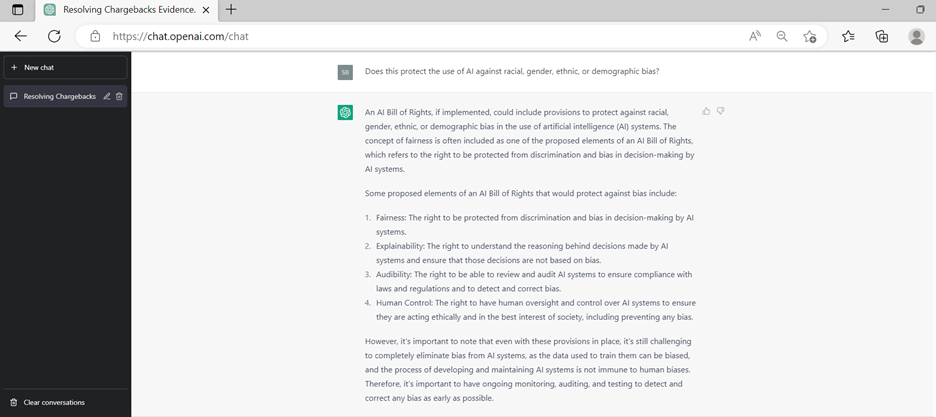

The data utilized to train ChatGPT appears to be comprehensive, including legislation, chargeback rebuttals, and many other policies. In Figure 2.0, ChatGPT accurately captures the fundamentals of the AI Bill of Rights, responding with its essential principles, while Figure 3.0 illustrates the elements it proposes to protect against bias.

(Figure 2.0)

(Figure 3.0)

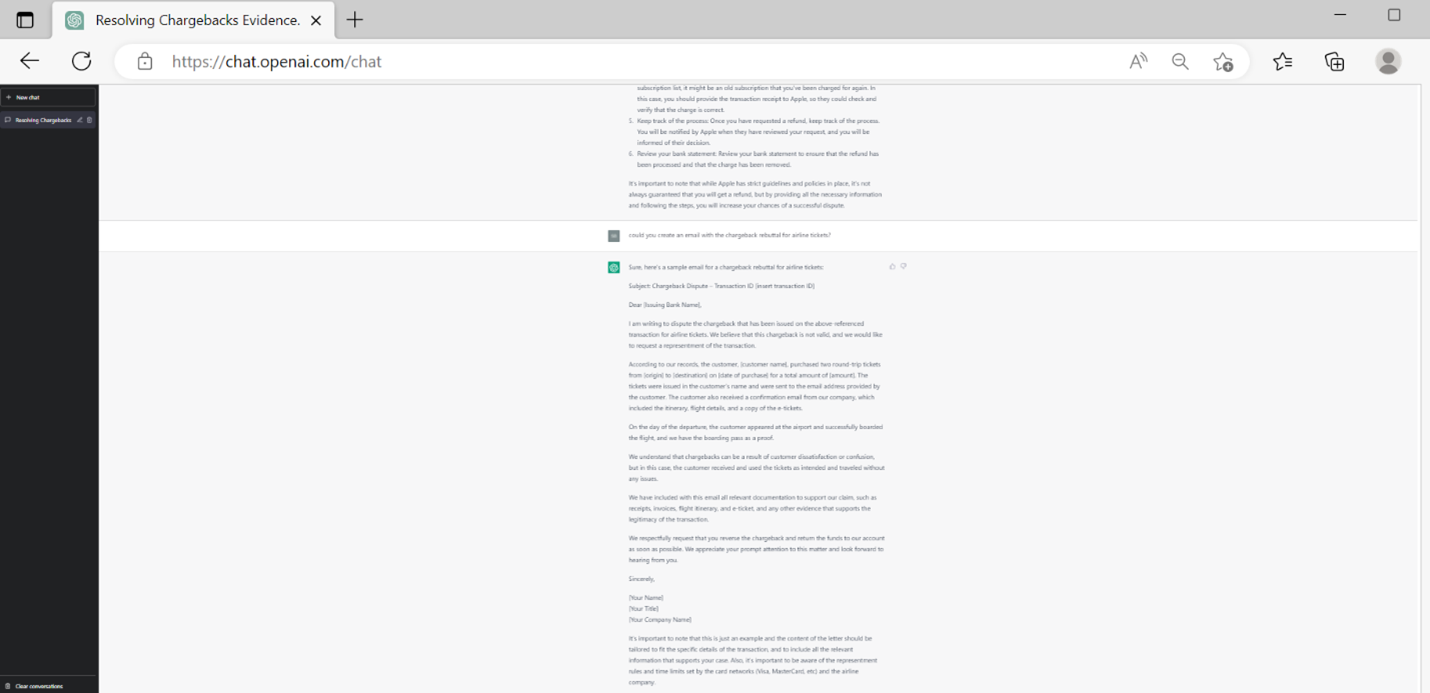

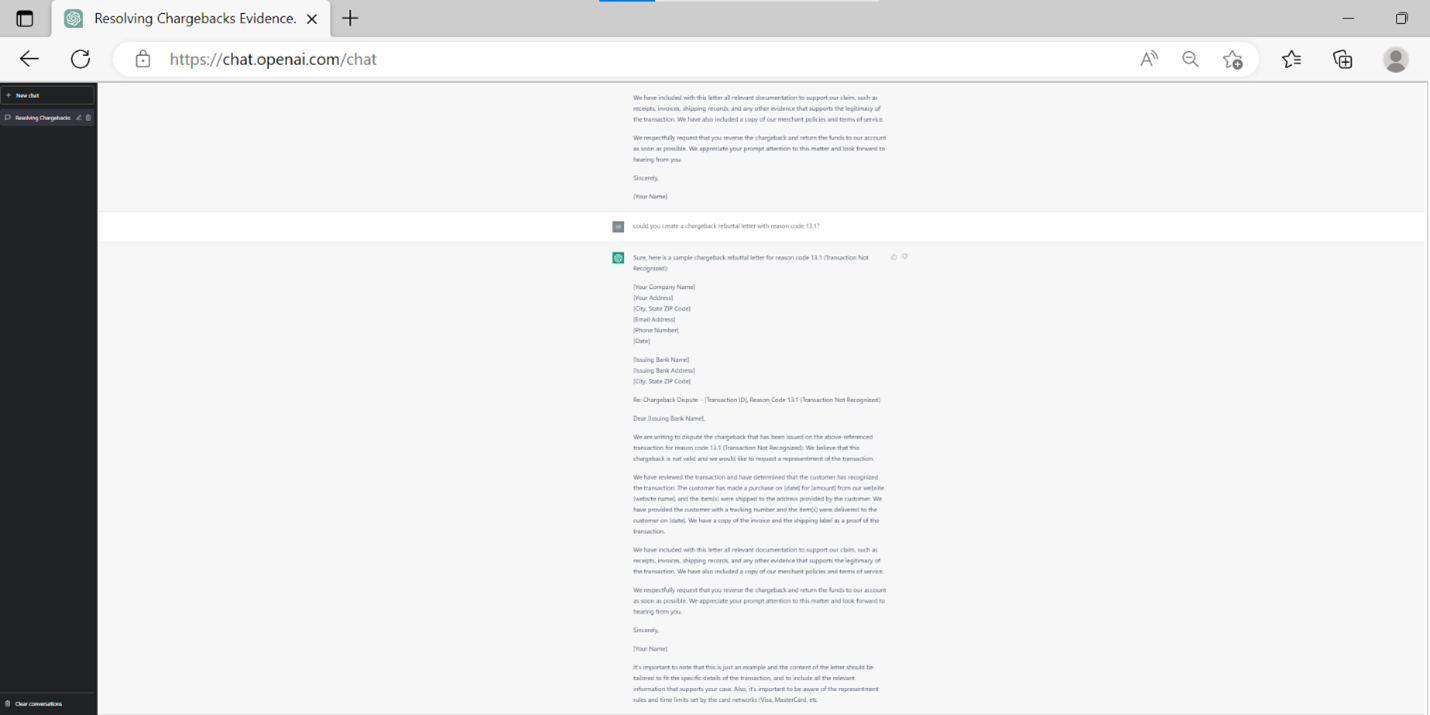

Regarding chargeback use cases, ChatGPT may generate content used in an email or letter sent to the respective financial institutions for rebuttal. Figure 4.0 illustrates rebuttal content for the purchase of an airline ticket, and Figure 5.0 demonstrates content generated for disputing a transaction that was not recognized.

(Figure 4.0)

(Figure 5.0)

The scenarios using ChatGPT appear rudimentary, though they reveal how data privacy may or may not be compromised, depending on a given corporation’s technology landscape and its response to data privacy. During correspondence with ChatGPT, as in Figures 4.0 and 5.0, the generated content may be enriched with customer account, transaction, and flight information while still meeting data privacy requirements.
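One way to read the enrichment described above is that the generated content stays generic and the customer-specific details are filled in afterwards, inside the enterprise. The sketch below assumes that interpretation; the rebuttal wording, field names, and record values are hypothetical stand-ins, not output from ChatGPT.

```python
from string import Template

# Hypothetical generic rebuttal text, standing in for content that was
# generated without any customer data in the prompt.
generic_rebuttal = Template(
    "Dear $issuer,\n"
    "We dispute the chargeback on transaction $transaction_id for "
    "flight $flight_number. The ticket was purchased by $customer_name "
    "and the boarding record confirms travel."
)


def enrich_locally(template, record):
    """Fill placeholders inside the enterprise boundary.

    substitute() raises KeyError when a required field is missing, so an
    incomplete letter is never produced silently.
    """
    return template.substitute(record)


# Hypothetical record that would come from internal account and
# transaction repositories.
record = {
    "issuer": "Example Bank",
    "transaction_id": "TX-1001",
    "flight_number": "XY123",
    "customer_name": "Jane Doe",
}

letter = enrich_locally(generic_rebuttal, record)
print(letter)
```

The design choice here is that account, transaction, and flight details never appear in a prompt; they are merged only after the generic text has been produced.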

Best Practices Framework

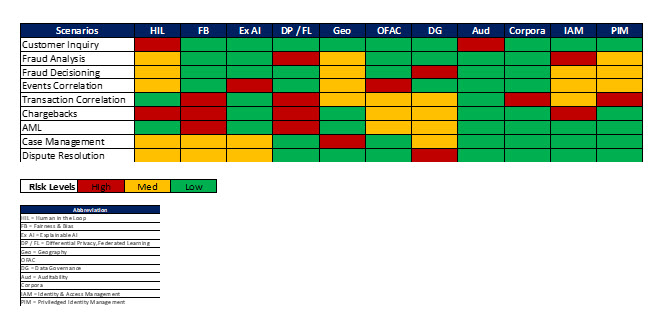

Enterprises seeking to adopt ChatGPT while complying with data privacy are better served by orchestrating their efforts through a framework. A codified framework, Figure 6.0, auto-assesses the organizational scenarios or processes that use ChatGPT, providing a risk score illustrated by a heatmap that reflects non-compliance with company policies, such as financial and data policies, as well as regulatory requirements such as HIPAA, GDPR, and others.

(Figure 6.0)
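The scoring behind such a heatmap is not specified in this article, so the following is only a sketch under stated assumptions: each scenario is rated from 0 (compliant) to 3 (severe gap) against each policy area, and the risk score is the normalized sum. The scenarios, ratings, and scoring formula are all hypothetical.

```python
# Hypothetical policy areas and 0-3 severity ratings per scenario.
POLICIES = ["GDPR", "HIPAA", "PCI DSS", "Company data policy"]
ASSESSMENT = {
    "Chargeback rebuttal letter": [1, 0, 2, 1],
    "Fraud case summarization": [3, 0, 3, 2],
    "Customer inquiry reply": [2, 1, 1, 1],
}
MAX_SEVERITY = 3


def risk_score(severities):
    """Normalize the summed severities to a 0-100 score (assumed formula)."""
    return round(100 * sum(severities) / (MAX_SEVERITY * len(severities)))


def heat_cell(severity):
    """Map a severity rating to a text shade, light to dark."""
    return {0: ".", 1: "-", 2: "+", 3: "#"}[severity]


def render_heatmap(assessment):
    """Render one row per scenario, one cell per policy in POLICIES order."""
    lines = []
    for scenario, severities in assessment.items():
        cells = " ".join(heat_cell(s) for s in severities)
        lines.append(f"{scenario:<28} {cells}  score={risk_score(severities)}")
    return "\n".join(lines)


print(render_heatmap(ASSESSMENT))
```

In practice the ratings would come from the automated assessment itself; here they are entered by hand purely to show how a per-scenario score and a heatmap row could be derived from them.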

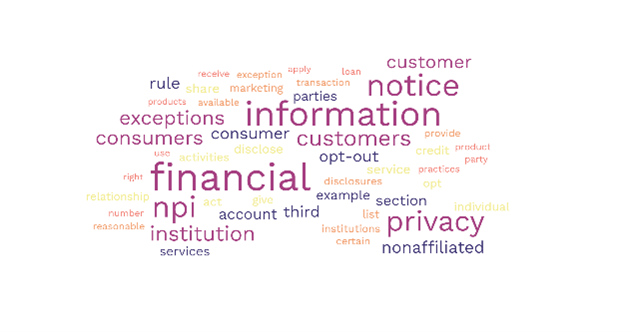

In addition, a word cloud, Figure 7.0, may further pinpoint the specific facets of policies or regulations that require remediation.

(Figure 7.0)
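A word cloud is typically driven by term frequencies, so the weighting behind one can be sketched as a simple count over assessment findings. The findings, stopword list, and `term_weights` helper below are hypothetical examples, not output of the framework.

```python
import re
from collections import Counter

# Hypothetical stopwords; a real list would be far longer.
STOPWORDS = {"a", "an", "the", "for", "of", "to", "no", "without", "before"}


def term_weights(findings, top_n=5):
    """Count non-stopword terms across findings; larger counts would be
    drawn larger in a word cloud."""
    words = []
    for finding in findings:
        tokens = re.findall(r"[a-z]+", finding.lower())
        words.extend(t for t in tokens if t not in STOPWORDS)
    return Counter(words).most_common(top_n)


# Hypothetical findings produced by an assessment.
findings = [
    "Prompt includes cardholder data without consent",
    "Consent record missing for transcript retention",
    "Retention period exceeds policy for cardholder data",
    "No consent captured before sending transcript",
]

for term, count in term_weights(findings):
    print(term, count)
```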

Implementing an automated assessment for ChatGPT using a heatmap and word cloud within an MLOps program accelerates the lifecycle of training, testing, validating, and deploying AI models at scale, while incorporating adversarial techniques to help mitigate cybersecurity risks. MLOps, complemented with a taxonomy of recommendations ranked by ROI and OPEX, further amplifies digital safety for ChatGPT implementations. ChatGPT may be integrated within the workflows of teams supporting customer contact centers or fraud operations, Figure 8.0.

(Figure 8.0)

Incorporating a human in the loop provides a feedback mechanism that improves the quality of responses from ChatGPT, while differential privacy or federated learning provides enhanced security for confidential data. These additional measures may increase the cost of implementing ChatGPT, a tradeoff that most organizations in a regulated industry would need to assess against their risk tolerance and cybersecurity posture.
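To make the differential privacy option more concrete, the sketch below uses the Laplace mechanism, a standard technique for releasing a noisy count. It is an illustration only: the article does not prescribe a mechanism, and the count, epsilon value, and function names are assumptions.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) noise.

    The difference of two independent exponential variables with mean
    `scale` follows a Laplace distribution with that scale.
    """
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(true_count, epsilon, rng, sensitivity=1.0):
    """Release a count with Laplace noise of scale sensitivity / epsilon.

    A smaller epsilon means stronger privacy and a noisier answer.
    """
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(0)  # seeded only so the sketch is repeatable
# Hypothetical query: number of disputed transactions in a fraud queue.
noisy = private_count(true_count=120, epsilon=1.0, rng=rng)
print(round(noisy, 2))
```

The added noise is one source of the cost and accuracy tradeoff mentioned above: lowering epsilon strengthens privacy but makes each released figure less precise.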

Conclusion

The announcement of ChatGPT may be a self-fulfilling prophecy, disrupting industries with the potential to automate tasks, improve operational efficiencies, and increase productivity while optimizing OPEX. ChatGPT is more than a standard chatbot due to extensive training on various datasets, industry standards, regulatory policies, FAQs, operational procedures, and many other forms of information. However, security pundits are alarmed by text generation capabilities that approach human-like responses. Digital safety for ChatGPT may be achieved by incorporating a best practices framework that ensures data privacy and secure operation of the technology infrastructure, complemented by human-in-the-loop capabilities that result in greater accuracy of bot responses. Incorporating ChatGPT within the boundaries of the enterprise, such as through its availability on Azure, provides a robust and secure technology landscape in support of your operations and business processes, enabling teams to focus on what matters most: customers and elevating their experience.

Sanjay B. Bhakta - Global Head of Solutions - Centific | LinkedIn

About Centific

Centific is a global digital technology services company with solutions across various industries. Led by a people-first, intelligence-driven approach, Centific enables experiences that help brands thrive in a sustainable and meaningful way, working to create long-term value and loyal customers for our clients – now and in the future. With clarity of vision, technological expertise, and operational excellence, Centific is the partner of choice for those who want to run smarter - and those who want to change the race.

References

Reimann, N. (2023, January 5). ChatGPT Creator OpenAI Discussing Offer Valuing Company At $29 Billion, Report Says. Forbes.

OpenAI. (2022, November 30). ChatGPT: Optimizing Language Models for Dialogue.

The White House. (n.d.). Blueprint for an AI Bill of Rights.

Metz, R. (2022, October 5). The White House released an ‘AI Bill of Rights’. Brookings.

WEForum. (2019, January). AI Governance: A Holistic Approach to Implement Ethics into AI. World Economic Forum.

AINow. (2018, April). Algorithmic Impact Assessments: A Practical Framework for Public Agency Accountability.

GDPR.EU. (n.d.). GDPR Checklist for Data Controllers.

PCI Security Standards Council. (2022, March). PCI DSS v4.0.

NIST. (2010, April). Guide to Protecting the Confidentiality of Personally Identifiable Information (PII). NIST Special Publication 800-122.